After Snowden’s revelations, many people are concerned about an omniscient and omnipotent NSA reading their email. NSA reportedly got access to content that was assumed to be encrypted (whatever that means), and that prompted the appearance of a new term in newspapers: “NSA-proof encryption”. This refers to communication tools with strong confidentiality and integrity guarantees; essentially end-to-end (authenticated) encryption following a key agreement using public keys exchanged and/or verified through an authenticated channel. When journalists say that NSA “cracked” some encryption, they are actually talking about access to decrypted content or to the secret keys, rather than actual attacks on the algorithms. That is, failures come more from privileged access to network nodes or links, from poor OPSEC, from software flaws, or from “backdoors”, rather than from old-school cryptanalysis.

That said, we should not conclude that NSA is unable to attack the algorithms, or never does so, just because such attacks appear economically uninteresting for mass surveillance. High-value targets may be worth the effort of a cryptanalytic attack, depending on factors including:

- The return on the offline investment (think one-time things like research, precomputation, hardware, datacenters, power plants, etc.): Will the attack setup be used for only one operation, or for several per year? Will it still be useful in 20 years? Can the investment serve other purposes or be “recycled”? etc.

- The return on the online investment (think the cost of the actual attack: time, storage, power, heat, etc.): Simply put, how much are the target assets worth strategically?

- The number of targets (sometimes a 1-out-of-$n$ attack is $n$ times as fast as the attack on a single target): Can multiple targets be attacked simultaneously? How many?

- The attack model: Do we only know ciphertexts of random data, or of data with known/guessable headers? Do we have access to an encryption or decryption oracle?

- The algorithmic advantage: How fast is the attack algorithm compared to generic attacks, to the best published methods?
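The multi-target point in the list above can be illustrated with a toy experiment. The sketch below is only an illustration under stated assumptions: the 16-bit key space, the `toy_encrypt` keyed hash standing in for a cipher, and the function names are all hypothetical choices made so that the search finishes instantly. Every target encrypts the same known plaintext under its own random key, and the attacker stops as soon as any one key is found.

```python
import hashlib
import random

KEY_BITS = 16  # toy key size (an assumption for the demo) so the search is instant


def toy_encrypt(key: int, plaintext: bytes) -> bytes:
    """Keyed hash used as a stand-in for a block cipher; not a real cipher."""
    return hashlib.sha256(key.to_bytes(4, "big") + plaintext).digest()[:8]


def trials_until_first_hit(num_targets: int, rng: random.Random) -> int:
    """Count key guesses until any one of num_targets keys is recovered."""
    plaintext = b"known header"  # the same known plaintext for every target
    secret_keys = rng.sample(range(2**KEY_BITS), num_targets)
    target_ciphertexts = {toy_encrypt(k, plaintext) for k in secret_keys}
    for trials, guess in enumerate(range(2**KEY_BITS), start=1):
        # One encryption per guess, checked against all targets at once.
        if toy_encrypt(guess, plaintext) in target_ciphertexts:
            return trials
    raise RuntimeError("unreachable: every secret key lies in the search space")


def average_trials(num_targets: int, runs: int = 20, seed: int = 1) -> float:
    """Average the number of guesses over several random sets of targets."""
    rng = random.Random(seed)
    total = sum(trials_until_first_hit(num_targets, rng) for _ in range(runs))
    return total / runs


if __name__ == "__main__":
    for n in (1, 16, 256):
        print(f"{n:4d} targets: ~{average_trials(n):.0f} guesses on average")
```

The cost per guess stays at one encryption plus a set lookup, so the expected number of guesses before the first success shrinks roughly in proportion to the number of targets. This only helps an attacker who is happy to break any one of the targets, and it relies on a plaintext that is known and shared across them.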

Since NSA is far from transparent about its cryptographic capabilities, we’re left with speculation and educated guesses. So let me give my take on the last point for some common crypto algorithms.

MD5

We can find MD5 collisions in seconds, and thanks to Marc Stevens we can even find chosen-prefix collisions (something halfway between collisions and second preimages, which can be exploited in multiple ways). But we’re far from general second preimages or preimages, although researchers published a preimage attack on the full MD5 that is theoretically 25 times faster than bruteforce but requires about a petabyte of memory. In his counter-cryptanalysis paper from CRYPTO 2013, Marc analyzes the chosen-prefix collision attack used for Flame and shows that it could have been improved. Perhaps NSA or their partners knew of a chosen-prefix collision attack before Marc’s publications, but the Flame case does not reveal much higher knowledge than the public’s. I don’t expect NSA to be capable of a preimage attack much better than bruteforce.

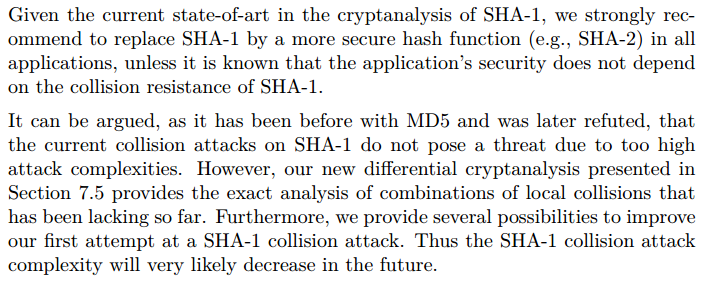

SHA-1

Again the best known results are by Marc Stevens, with a 2-block identical-prefix collision attack with complexity estimated at $2^{61}$ compression function-equivalent operations, which can be extended to a chosen-prefix collision attack with complexity estimated at $2^{77.1}$. We can reasonably assume that NSA knows slightly better attacks, and has the capability to compute a chosen-prefix collision within less than a year (especially given the partial parallelism of the attack). However, unlike attacks on encryption, exploiting a chosen-prefix collision attack to (say) forge valid certificates would directly reveal that capability. But there are probably more surreptitious ways to exploit SHA-1 weaknesses.
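To get a feel for what "within less than a year" means, here is a back-of-the-envelope sketch. It assumes the estimates published by Stevens, about $2^{61}$ compression function-equivalent operations for the identical-prefix attack and about $2^{77.1}$ for the chosen-prefix one, and a perfectly parallel computation, which is optimistic given that the attack is only partially parallel. The per-device rate in the usage line is purely hypothetical.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600

# Assumed attack costs, as log2 of compression function-equivalent operations.
LOG2_IDENTICAL_PREFIX = 61.0
LOG2_CHOSEN_PREFIX = 77.1


def required_rate(log2_operations: float, years: float = 1.0) -> float:
    """Operations per second needed to finish within the given time."""
    return 2.0**log2_operations / (years * SECONDS_PER_YEAR)


def devices_needed(log2_operations: float, per_device_rate: float,
                   years: float = 1.0) -> int:
    """Number of devices needed, assuming ideal parallel scaling."""
    return math.ceil(required_rate(log2_operations, years) / per_device_rate)


if __name__ == "__main__":
    for name, cost in (("identical-prefix", LOG2_IDENTICAL_PREFIX),
                       ("chosen-prefix", LOG2_CHOSEN_PREFIX)):
        print(f"{name}: {required_rate(cost):.2e} operations per second for one year")
    # Hypothetical device doing 1e9 compression calls per second.
    print(devices_needed(LOG2_CHOSEN_PREFIX, per_device_rate=1e9), "devices")
```

Under these assumptions the chosen-prefix attack needs a sustained rate on the order of $5 \times 10^{15}$ compression calls per second for a year, which is large but nowhere near the scale of the numbers that appear for AES.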

This is copied from Marc’s recommendations in his PhD thesis:

AES

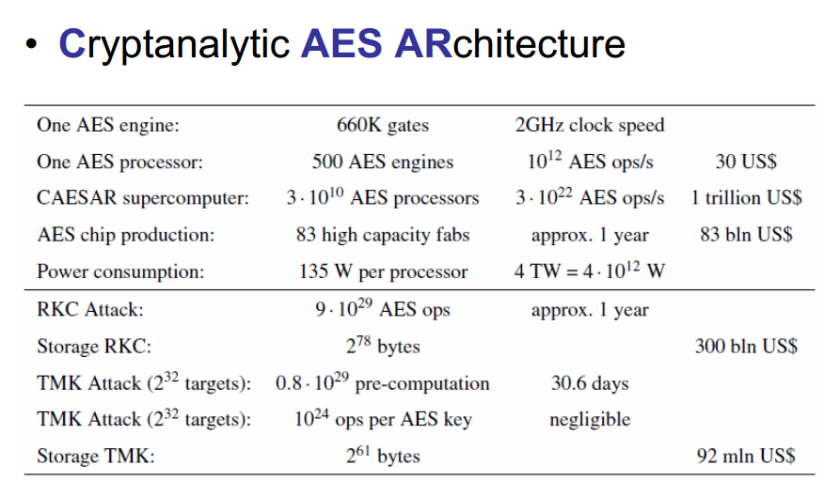

AES-128, -192, and -256 have all been “broken” with banana attacks: differential $q$-multicollisions, related-subkey attacks, or bicliques. The good news is that these attacks have complexities close to that of bruteforce, and work in insane attack models anyway. So we’re left with the generic (parallel) bruteforce methods and I expect NSA to have the same level of progress. Does it mean that NSA can’t “crack” AES-encrypted ciphertexts? Actually no: as speculated by Alex Biryukov and Johann Großschädl, to break AES-128 in one year you just need

- To design a dedicated AES processor with 500 high-throughput AES cores clocked at 2GHz (giving a throughput of about $10^{12}$ AES evaluations per second).

- To produce 30 billion units of this design, along with 10TB of storage attached to each processor. You’ll need to build 83 high-capacity fabs ($1 billion each) to get the chips delivered within 30 months.

- To build a few power plants: with 135W per AES processor, the supercomputer requires about 4TW of power (approximately 1000 times the power generated by the four nuclear reactors of the Fukushima Daini plant).
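The figures in this list can be cross-checked with a few lines of arithmetic. The sketch below only uses numbers quoted above; the one added assumption is that each core completes one AES evaluation per clock cycle, which is what the quoted per-chip throughput implies.

```python
import math

CORES_PER_CHIP = 500
CLOCK_HZ = 2e9        # assumed: one AES evaluation per core per cycle
CHIPS = 30e9          # 30 billion processors
WATTS_PER_CHIP = 135
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def aes_machine_summary() -> dict:
    """Derive throughput, yearly work and power from the quoted parameters."""
    per_chip_rate = CORES_PER_CHIP * CLOCK_HZ       # evaluations per second
    total_rate = per_chip_rate * CHIPS
    evaluations_per_year = total_rate * SECONDS_PER_YEAR
    return {
        "per_chip_rate": per_chip_rate,
        "total_rate": total_rate,
        "log2_evaluations_per_year": math.log2(evaluations_per_year),
        "power_watts": CHIPS * WATTS_PER_CHIP,
    }


if __name__ == "__main__":
    summary = aes_machine_summary()
    print(f"per chip : {summary['per_chip_rate']:.1e} AES/s")
    print(f"machine  : {summary['total_rate']:.1e} AES/s")
    print(f"one year : 2^{summary['log2_evaluations_per_year']:.1f} AES evaluations")
    print(f"power    : {summary['power_watts'] / 1e12:.2f} TW")
```

The machine performs a bit less than $2^{100}$ AES evaluations per year, far short of the roughly $2^{127}$ expected for an exhaustive search of a single 128-bit key. So the one-year figure cannot come from plain bruteforce against one key; it has to rely on attacking many targets at once or on a time-memory tradeoff.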

The authors observe that “energy seems to be the main bottleneck”. Ah, and you’ll need the target message to be encrypted under distinct keys. More details in the table below, copied from the presentation of Alex and Johann:

RSA

Daniel J. Bernstein recently discussed how to optimally use NSA’s 65-MW infrastructure in Bluffdale to break 1024-bit RSA with state-of-the-art algorithms. He makes the assumptions that “NSA is not stupid” and that NSA knows of no better attack than we do (optimized number field sieve; in particular, he assumes that RSA will be attacked by factoring its modulus). He concludes that the hypothetical RSA-cracker would have:

- A higher ratio of arithmetic over communication compared to general-purpose supercomputers, in part to optimize the efficiency of the linear algebra step.

- Heavy ALU-to-ALU communications to speed up operations like matrix products or FFT, as opposed to fewer ALU-to-RAM connections that would dramatically increase latency.

- A 2-dimensional architecture (mesh) to speed up computations with heavy communications, as opposed to a 1-dimensional architecture (ring bus) like that of an Intel Xeon Phi.

- A dedicated architecture reducing the overhead induced by instruction decoding and scheduling, with a dedicated instruction set including vectorized operations.

Is that NSA’s plan for an RSA cracker? Has NSA already factored 1024-bit RSA moduli? I tend to believe that it’s not the optimal way to use their resources, but that’s just a guess. Even more unlikely, from my point of view, is the quantum-computer hypothesis.
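For a rough sense of scale, the heuristic running time of the number field sieve is usually written as $\exp\left((64/9)^{1/3} (\ln n)^{1/3} (\ln \ln n)^{2/3}\right)$ for a modulus $n$. The sketch below evaluates that expression and reports it in bits. It drops the $o(1)$ term and says nothing about memory or communication costs, so the absolute values are crude and only the growth with modulus size should be taken seriously. The function name is a hypothetical choice for this example.

```python
import math


def gnfs_log2_cost(modulus_bits: int) -> float:
    """log2 of the heuristic NFS cost for a modulus of the given bit length.

    Uses exp(c * (ln n)^(1/3) * (ln ln n)^(2/3)) with c = (64/9)^(1/3),
    ignoring the o(1) term, so this is a rough estimate only.
    """
    ln_n = modulus_bits * math.log(2)
    c = (64 / 9) ** (1 / 3)
    exponent = c * ln_n ** (1 / 3) * math.log(ln_n) ** (2 / 3)
    return exponent / math.log(2)


if __name__ == "__main__":
    for bits in (1024, 2048, 3072):
        print(f"{bits}-bit modulus: about 2^{gnfs_log2_cost(bits):.1f} operations")
```

With this formula a 1024-bit modulus lands near $2^{87}$ and a 2048-bit modulus near $2^{117}$. The commonly quoted NIST equivalences are more conservative, at 80 and 112 bits respectively.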

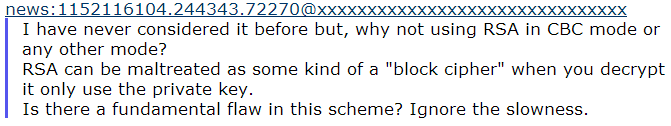

Anyway, if you have to use RSA today for long-term security (if, for example, forward secrecy isn’t guaranteed), there is no excuse for using moduli shorter than 2048 bits, as much as there is no excuse for using symmetric keys shorter than 128 bits (exercise: find the inconsistency here), or for things like this:

TL;DR

I don’t think that NSA cryptanalysts are eons ahead of the rest of us. NSA just has insane resources, but even with that I’m not sure that dedicated high-complexity crackers are the best investment compared to general-purpose data analysis engines.

EDITED TO ADD (Jul 25): in this CNET article several cryptographers comment on the feasibility of factoring 1024-bit RSA moduli. In particular Eran Tromer was reported to say that “[moving] to 90-nanometer semiconductor technology that was reached in 2005 brings the cost to $1.1 million for hardware that breaks 1,024-bit keys at the rate of one a year, not counting initial engineering and fabrication”.

4TW is about a thousand times more than a typical nuclear power plant generates. So that number might be off!

Sure, my bad, Fukushima Daini’s power is obviously 4GW rather than 4TW, so not 1 but 1000 plants are needed for the attack (the 4TW requirement is correct though). Thanks for catching that!

First, this is a well-written article. That said, with everything that is going on, it seems to make sense to begin asking for (or demanding, or designing into things, depending on who you are), the following: 1) longer encryption keys / ECC, 2) perfect forward secrecy, 3) OTR design and no logs policy/settings, 4) zero knowledge (zero knowledge proofs employed such that no-one can see what files you have, what password you use (regardless of what service we are talking about), where bitcoins come from [Zerocoin comes to mind], etc.) Things that come to mind that seem to kind of walk down this path – that people commonly use… Pidgin with OTR or Adium, riseup, SpiderOak, sonic.net (or services with similar policies). I’m not convinced that the above things mentioned (Pidgin OTR, Adium, riseup, etc etc) actually fulfill all four of the desirable conditions mentioned here. But my guess is that these things could be developed further without great difficulty to meet the stronger and safer conditions to address the kind of problems that we face today, addressing the four conditions, while satisfying completeness, soundness, and zero knowledge.

Great Job!

Reblogged this on Albert Sans – Blog and commented:

A good reflection on the computational limitations of the NSA, or of other agencies such as the British GCHQ, when it comes to spying, and a brief review of the vulnerabilities in present-day cryptography.