With all of the hype lately around AI and Large Language Models (LLMs) following the release of demos such as ChatGPT, what tends to get lost are the realities of people trying to use these tools today, not in the future. Beyond asking for recipes in the style of Shakespeare and sifting through hallucinations generated by the model, developers are using these tools to write and understand code.

Coding is a use case that shows real potential. Programming languages are more rigid in construction and less open to interpretation than human languages, but that doesn’t mean there aren’t plenty of risks to consider. This is why we wrote a paper detailing these risks and providing steps that security and development teams can take to address them.

In Use

Developers primarily use these tools to perform one of three tasks:

- Completions

- Explanations

- Documentation

Each of these tasks can introduce issues. The most impactful arise on the completions side, where developers may prompt the tool to write functions, complete lines of code, or perform even more advanced tasks.

Risks

Applying LLMs to coding tasks carries several risks. A couple of the highlights are listed below.

There are no guarantees of secure outputs

These tools can and do output vulnerable code. Previous researchers have shown examples of this, and so does this new paper. Because the outputs come with no security guarantee, additional processes and tooling are necessary to ensure that vulnerable code doesn’t make it into production systems.
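To make this concrete, here is a minimal sketch of the kind of vulnerable pattern a completion might plausibly contain, next to a safer alternative. It is an illustration, not an output captured from any particular tool or an example taken from the paper. The function names, the `users` table, and the demo data are all hypothetical.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # Vulnerable pattern: user input is concatenated straight into the SQL text,
    # so a crafted username can change the meaning of the query (SQL injection).
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Safer pattern: a parameterized query keeps the input as data, not SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


def make_demo_db():
    # Hypothetical in-memory database used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"
    print("unsafe:", find_user_unsafe(conn, payload))  # leaks every row
    print("safe:  ", find_user_safe(conn, payload))    # matches nothing
```

Both functions look reasonable at a glance, which is why review, static analysis, and testing still need to sit between a suggestion and production.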

Consistency and Reliability Issues

How these tools make recommendations isn’t clear to the developer using the tool. Because prompts are constructed from code already written in the project, poor-quality existing code can lead to poor-quality output from the tool. Even where the tool would have produced a secure output under normal conditions, the default output may now be vulnerable.
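The sketch below illustrates why existing code matters. It assumes a deliberately simplified prompt builder that keeps the tail of the file written so far. `build_prompt`, its parameters, the `# Path:` header, and the sample code are all hypothetical and do not describe how any specific product assembles its prompts.

```python
def build_prompt(file_path: str, preceding_code: str, max_chars: int = 2000) -> str:
    """Hypothetical prompt builder: a path header plus the tail of the code so far."""
    # Keep at most max_chars characters from the end of the existing code.
    context = preceding_code[-max_chars:] if max_chars > 0 else ""
    return f"# Path: {file_path}\n{context}"


# Code already in the project. The weak hashing choice becomes part of the
# context the model is asked to continue from.
existing_code = '''import hashlib

def hash_password(password):
    # Weak: unsalted MD5
    return hashlib.md5(password.encode()).hexdigest()

def hash_api_token(token):
'''

prompt = build_prompt("auth/utils.py", existing_code)

if __name__ == "__main__":
    print(prompt)
```

Under these assumptions, the prompt ends with an unfinished function directly below an insecure one, so a model that continues in the style of its context has every reason to repeat the weak pattern.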

Data leakage issues

Tools like GitHub Copilot and ChatGPT are provided in an as-a-service model. This means everything you provide to these tools via a plugin, API, or web interface is collected, possibly stored, analyzed, and reviewed by a third party. Once your data is in the hands of a third party, you can lose control of it.

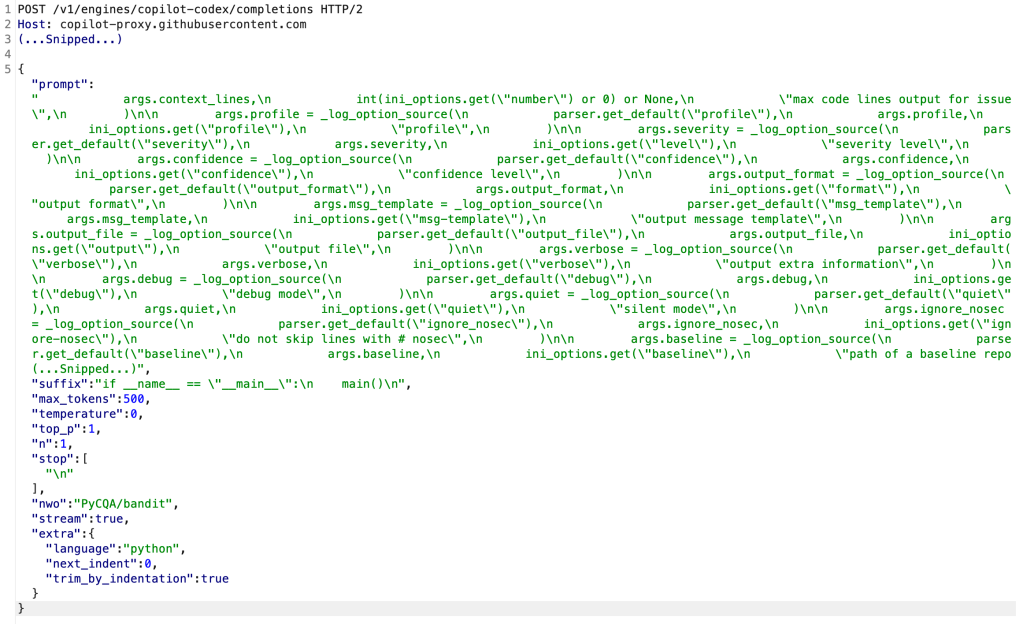

As an example, GitHub Copilot is basically a key logger running inside of the developer’s IDE. The following image shows GitHub Copilot performing prompt engineering based on a keypress inside the developer’s IDE, sending code, comments, and project metadata to a 3rd party.

Further Thought

These are just a few of the risks posed by these tools. For a fuller analysis, additional risks, and mitigation strategies, download the Kudelski Security Research paper Addressing Risks from AI Coding Assistants.

It’s a safe bet that more AI tools aimed at developers and focused on coding tasks will launch in the coming years. Although our paper focused mostly on GitHub Copilot, and to a lesser extent on ChatGPT, the risks and mitigation strategies apply to these tools more generally.