Introduction

Verizon’s 2015 DBIR contained a small section on mobile malware, but the part on iOS stated that the alerts raised on this platform were false positives actually triggered by Android devices (“most of the suspicious activity logged from iOS devices was just failed Android exploits”). This is great, as it means that all iOS users are safe from malware! Well, almost safe: it is commonly assumed that the threat comes from unofficial third-party stores and that, as such, only jailbroken devices are at risk.

The rising concern is due to several news reports, starting at the end of 2014, describing malicious code targeting non-jailbroken devices. The first malware to make the news were YiSpecter and XcodeGhost, followed more recently by the Pegasus attack leveraging the Trident vulnerabilities.

The goal of this post is to explain what can be performed on either a jailbroken or non-jailbroken iOS device.

Commonly used techniques

Infection

Non-conventional installation methods

Phishing

The main technique used by attackers to deploy applications is phishing. They lure users into installing applications using links in emails or a promotion for a specific application.

In the case of jailbroken devices, attackers can also publish their applications on third-party stores and lure people into installing them instead of the official application, for example.

Traffic injection

According to research presented at HackInParis, traffic injection seems to be a common technique in Asia. It can be performed quite easily on public WiFi networks or through a fake base station controlled by the attackers. In this second case, links are injected into 2G/3G communications. The same cannot be done on LTE networks, since mutual authentication prevents devices from connecting to fake networks (except through downgrade attacks, but in that case the communication takes place over 2G/3G).

It also seems that the infrastructure of Chinese ISPs has been subject to DNS redirection attacks.

USB

Once an iOS device is paired with a Mac or PC, it is possible to perform actions on the device over WiFi or USB through Apple’s usbmuxd service on OS X or its equivalent on Windows. This service allows listing, installing, or removing applications on iOS and is based on the MobileDevice library (MobileDevice.framework on OS X and iTunesMobileDevice.dll on Windows); the open source libimobiledevice library offers the same functionality. The HackingTeam implant for iOS used this technique to be deployed from a compromised desktop, as did the malicious application Wirelurker.

Legitimate security tools like zYiRemoval from Zimperium also use this technique to detect and uninstall known malicious applications.

Exploitation of software vulnerabilities

This last technique is less common but has already been seen in the wild: jailbreakme.com was the first example. When a user visited the web page, a vulnerability reachable through the browser (in an image parsing library in that case) was exploited to gain code execution inside the browser sandbox. From there, a kernel exploit was used to gain sufficient privileges to jailbreak the device.

A similar technique was recently used in the Pegasus attack: attackers used three vulnerabilities (named the Trident vulnerabilities in Lookout’s report) to gain code execution, escape the browser sandbox, and run code at an elevated privilege level in a persistent manner.

For the moment, this type of attack is mostly aimed at high value targets or used by state-sponsored attackers, because it is technically difficult to deploy and has a fairly short lifespan once it is detected and patched by Apple.

Jailbroken devices

This is the easiest case for attackers as restrictions put in place by Apple are disabled (code signing requirement for all executable memory pages for example) in order to be able to install applications from unofficial stores and also perform security assessments of the system and applications. As such an attacker can deploy an application without the need to sign it using a Developer or Enterprise signing certificate.

For example, iOS.KeyRaider targeted jailbroken devices.

Standard devices

In the case of standard devices it is necessary that binaries are signed in order to be allowed to run. Two options are possible in order to get to this point: AppStore distribution through a Developer account or In-House distribution through an Enterprise account.

It should however be noted that since Xcode 7 it is possible to “self sign” applications for deployment on our own devices. This would allow an attacker to deploy an application from a compromised macOS machine on which Xcode is installed (Xcode is not installed by default on OS X or macOS).

AppStore

To deploy malware on the Apple AppStore, attackers have to evade the detection methods applied to submitted applications and also bypass the iOS sandbox (Seatbelt), which places hard restrictions on malicious actions.

One of the points validated by Apple is the list of entitlements requested by the application, as well as the use of private API calls. Entitlements are privileges that applications need to request in order to (in theory) access specific APIs (microphone, GPS, contacts, …).

As such attackers need to find holes in Apple tests and missing validations in API calls to be able to deploy a malicious application that bypasses restrictions. Both were already proven and demonstrated at security conferences or in the press and patched by Apple afterward.

In House

In-house applications, i.e. applications signed with an Enterprise Developer certificate, can be deployed simply by following a link to a manifest.plist file from Mobile Safari. It should however be noted that a valid certificate, signed by a CA installed on the device, must be used to establish the SSL connection that downloads the IPA file designated in the software-package field of the manifest.plist file.

When the application is not signed by the same entity as the one used in the configuration profile pushed through an MDM, the installation process requests user validation with a warning message indicating the name of the company that registered the Enterprise Developer certificate. Before iOS 9, users would also get a second warning upon the first execution of the application. It should be noted that if the application was already installed and then removed, the warning is not displayed a second time upon re-installation.

Since iOS 9 the validation workflow has changed: users have to manually trust the configuration profile (if it was not deployed through an MDM during the device’s enrollment) in the device’s settings before being allowed to run the application. This setting is located under Settings > General > Profiles.
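To make the role of the manifest more concrete, here is a minimal Python sketch that reads a manifest.plist and lists the IPA URLs it points to. The sample manifest, its URL, and its bundle identifier are placeholders invented for illustration, and the helper name `list_packages` is hypothetical.

```python
import plistlib

# Hypothetical sample manifest: the URL and bundle identifier are placeholders.
SAMPLE_MANIFEST = b"""<?xml version="1.0" encoding="UTF-8"?>
<plist version="1.0">
<dict>
  <key>items</key>
  <array>
    <dict>
      <key>assets</key>
      <array>
        <dict>
          <key>kind</key><string>software-package</string>
          <key>url</key><string>https://example.com/apps/demo.ipa</string>
        </dict>
      </array>
      <key>metadata</key>
      <dict>
        <key>bundle-identifier</key><string>com.example.demo</string>
        <key>bundle-version</key><string>1.0</string>
        <key>kind</key><string>software</string>
        <key>title</key><string>Demo</string>
      </dict>
    </dict>
  </array>
</dict>
</plist>
"""


def list_packages(manifest_bytes):
    """Return (bundle identifier, IPA URL) pairs declared in a manifest."""
    manifest = plistlib.loads(manifest_bytes)
    packages = []
    for item in manifest.get("items", []):
        bundle_id = item.get("metadata", {}).get("bundle-identifier")
        for asset in item.get("assets", []):
            # Only the software-package asset points to the IPA itself.
            if asset.get("kind") == "software-package":
                packages.append((bundle_id, asset.get("url")))
    return packages


print(list_packages(SAMPLE_MANIFEST))
```

Such a check could be useful when triaging a suspicious installation link, since it shows where the IPA would actually be fetched from.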

Malicious actions

Standard device

Restricted environment

The rules of the iOS sandbox apply equally to applications signed with either a Developer or an Enterprise certificate, and as such possible actions are quite limited. The main difference is that applications signed with an Enterprise Developer certificate do not go through Apple’s validation process and can therefore request entitlements or call APIs that are not allowed on the AppStore. Still, in both cases users are the final judges of what those applications can do, since entitlements still require the user’s approval (access to contacts, location, microphone, healthcare information…).

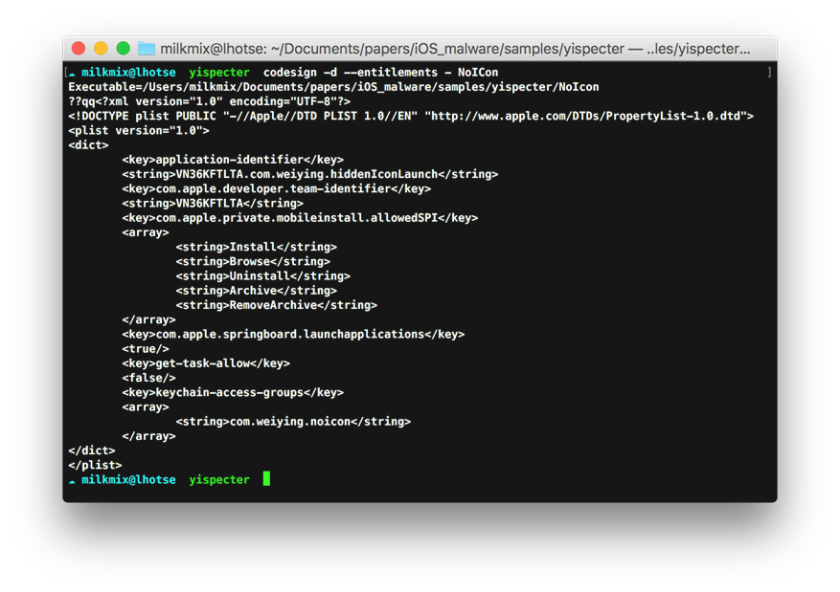

Privileges requested by an application can be listed using the codesign binary as shown below.

Other than access to contacts (which is considered sensitive information in many places), a malicious application could for example request access to the microphone in order to listen to the discussion during a meeting. Although this is technically feasible through the UIBackgroundModes option set to audio in the application’s Info.plist file, a visual indication will warn the user (blue ribbon on top of the screen). Applications that do not define this option might be suspended by the OS task scheduler.

Entitlements accessible to AppStore applications can be requested by developers through the developer.apple.com portal, but many more exist. These are reserved for Apple and its partners, as was the case for the Junos Pulse application, which was able to establish a VPN tunnel before this feature was officially available to AppStore applications.

Keylogging

Since iOS 8 it is possible to develop extensions and distribute them on the AppStore. They make it possible to offer an alternative keyboard, as on Android, or to filter visited URLs (an ad blocker for example). The first type is quite interesting from an attacker’s perspective, as it would allow writing a keylogger for iOS.

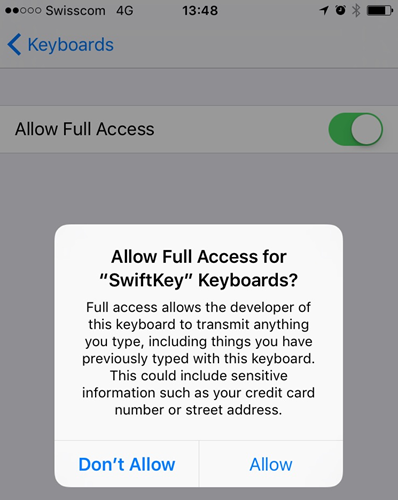

Apple initially announced two restrictions to prevent this type of attack:

- 3rd party keyboards are disabled when users are entering text in input fields marked as Secure

- extension keyboards are isolated from their main applications and cannot interact with the filesystem or the network

There is however an option named NSExtensionAttributes::RequestsOpenAccess that can be set by developers and allows keyboards to access the network API. One of HackingTeam’s implants uses this technique to perform keylogging on infected devices.

It should however be noted that if this option is enabled in an application published on the AppStore, Apple can perform a more in-depth validation of its code. Users also need to manually enable the feature by going to the following configuration menu: Settings > General > Keyboard > Keyboards, as depicted below with the example of the SwiftKey keyboard, which uses the connection to perform analytics.
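As an illustration, the following Python sketch checks whether the Info.plist of an extension declares a keyboard that asks for open access. It assumes the key is spelled `RequestsOpenAccess` and that keyboard extensions use the `com.apple.keyboard-service` extension point, as in Apple’s documentation; the helper name and the sample plist are hypothetical.

```python
import plistlib

KEYBOARD_EXTENSION_POINT = "com.apple.keyboard-service"


def keyboard_requests_open_access(info_plist_bytes):
    """Return True if the plist describes a keyboard extension with open access."""
    info = plistlib.loads(info_plist_bytes)
    extension = info.get("NSExtension", {})
    if extension.get("NSExtensionPointIdentifier") != KEYBOARD_EXTENSION_POINT:
        return False  # not a custom keyboard extension
    attributes = extension.get("NSExtensionAttributes", {})
    return bool(attributes.get("RequestsOpenAccess", False))


# Hypothetical Info.plist of a keyboard extension, built in memory for the example.
sample = plistlib.dumps({
    "CFBundleIdentifier": "com.example.demo.keyboard",
    "NSExtension": {
        "NSExtensionPointIdentifier": KEYBOARD_EXTENSION_POINT,
        "NSExtensionAttributes": {"RequestsOpenAccess": True},
    },
})

print(keyboard_requests_open_access(sample))
```

In practice the Info.plist would be read from the extension bundle inside the unpacked IPA.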

Private API

The agreement that developers sign when joining the Developer program states that access to private APIs is strictly forbidden, and validations are in place to detect such attempts. Several cases proved that it was possible to bypass these checks and that the technique was actively used by malicious applications. Using private APIs does not allow bypassing the iOS sandbox restrictions (well, it shouldn’t).

Private APIs are all the functions not specifically defined by Apple in the SDK header files. It is possible to retrieve lists of private APIs either by using classdump-dyld on a jailbroken device or by browsing Nicolas Seriot’s project.

An easy but not stealthy method to call private APIs is to directly call them if they are exported as C functions or use dlopen/dlsym.
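The dlopen/dlsym approach boils down to resolving a symbol from its name at run time. The sketch below shows the same mechanism from Python on a desktop POSIX system (not on iOS), where `ctypes.CDLL(None)` wraps dlopen and attribute lookup wraps dlsym. The helper `call_by_name` is hypothetical and the harmless `strlen` function merely stands in for a private symbol.

```python
import ctypes


def call_by_name(symbol_name, *args, restype=ctypes.c_int, argtypes=None):
    """Resolve a C function from its name at run time and call it."""
    # CDLL(None) is dlopen(NULL): it exposes symbols already loaded in the process.
    handle = ctypes.CDLL(None)
    # Attribute lookup on the handle performs the dlsym() call.
    function = getattr(handle, symbol_name)
    function.restype = restype
    if argtypes is not None:
        function.argtypes = argtypes
    return function(*args)


# The name is assembled at run time, so it never appears as a direct reference.
name = "str" + "len"
print(call_by_name(name, b"hello", restype=ctypes.c_size_t, argtypes=[ctypes.c_char_p]))
```

Because the symbol name is an ordinary string, a simple scan of the import table does not reveal the call, although the strings passed to dlsym can still be spotted.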

Another method is to use the reflection capabilities of the Objective-C language and refer to classes and their methods by their textual names. For example, a call to [[LSApplicationWorkspace defaultWorkspace] allApplications] can be written as follows, so that neither the class nor the selectors are referenced directly:

    // Resolve the private class and its selectors by name at run time.
    Class lsapp = objc_getClass("LSApplicationWorkspace");
    SEL s1 = NSSelectorFromString(@"defaultWorkspace");
    NSObject *workspace = [lsapp performSelector:s1];
    SEL s2 = NSSelectorFromString(@"allApplications");
    NSLog(@"apps: %@", [workspace performSelector:s2]);

Loading a framework and calling a method can be performed through calls to [NSBundle bundleWithPath:], NSClassFromString and objc_msgSend*. I described these points in the article on iOS applications reverse engineering published here.

As written above, some parts of the private APIs are regulated by entitlements not authorized on the AppStore, such as com.apple.CommCenter.fine-grained, which grants access to CoreTelephony functionality and allows the following actions:

- retrieve notifications upon reception or sending of a text message

- retrieve notifications when a phone call is performed

- retrieve IMSI and IMEI information

- …

Applications installation

Once an application is installed on the device using the methods described above, it is able to deploy others using two methods:

- ask installd to perform the work

- use the [MobileInstallation MobileInstallationInstall] private API if the application possesses the com.apple.private.mobileinstall.allowedSPI entitlement set to Install (like the example from YiSpecter shown below)

The first method is based on a click on a URL using the itms-services:// handler which is handled by the installd daemon:

itms-services://?action=download-manifest&url=<url to plist>
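The following Python sketch shows how such a link can be assembled and, from a defender’s point of view, how the manifest URL can be recovered from a link found in an email or a web page. The helper names and the example.com URL are hypothetical, and requiring an https manifest URL is an assumption based on the SSL requirement mentioned earlier.

```python
from urllib.parse import parse_qs, quote, urlsplit

PREFIX = "itms-services://?action=download-manifest&url="


def build_install_link(manifest_url):
    """Build an itms-services link pointing at a manifest.plist."""
    if not manifest_url.startswith("https://"):
        # Assumption: the manifest has to be served over SSL/TLS.
        raise ValueError("manifest URL is expected to use https")
    return PREFIX + quote(manifest_url, safe="")


def extract_manifest_url(link):
    """Return the manifest URL embedded in an itms-services link, or None."""
    parts = urlsplit(link)
    if parts.scheme != "itms-services":
        return None
    query = parse_qs(parts.query)
    if query.get("action") != ["download-manifest"]:
        return None
    urls = query.get("url")
    return urls[0] if urls else None


link = build_install_link("https://example.com/apps/manifest.plist")
print(link)
print(extract_manifest_url(link))
```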

The second one relies on an entitlement that is disallowed on the AppStore but can be used by applications signed with an Enterprise Developer certificate. To retrieve entitlements from a binary, we can extract them from the data referenced by the LC_CODE_SIGNATURE load command, either using sed and searching for the opening and closing plist tags, or simply using the codesign binary:

    $ codesign -d --entitlements - NoIcon
    Executable=/Users/.../samples/yispecter/NoIcon
    ??qq<?xml version="1.0" encoding="UTF-8"?>
    <!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">
    <plist version="1.0">
    <dict>
        <key>application-identifier</key>
        <string>VN36KFTLTA.com.weiying.hiddenIconLaunch</string>
        <key>com.apple.developer.team-identifier</key>
        <string>VN36KFTLTA</string>
        <key>com.apple.private.mobileinstall.allowedSPI</key>
        <array>
            <string>Install</string>
            <string>Browse</string>
            <string>Uninstall</string>
            <string>Archive</string>
            <string>RemoveArchive</string>
        </array>
        <key>com.apple.springboard.launchapplications</key>
        <true/>
        <key>get-task-allow</key>
        <false/>
        <key>keychain-access-groups</key>
        <array>
            <string>com.weiying.noicon</string>
        </array>
    </dict>
    </plist>
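The codesign output can also be post-processed automatically. The Python sketch below locates the XML plist in the raw output, parses it, and flags entitlements in the com.apple.private. namespace. The sample is abbreviated from the YiSpecter output in this post; the helper names are hypothetical, and treating that prefix as suspicious is only a heuristic assumption.

```python
import plistlib

# Abbreviated from the codesign output of the YiSpecter sample.
SAMPLE_OUTPUT = b"""Executable=/Users/.../samples/yispecter/NoIcon
??qq<?xml version="1.0" encoding="UTF-8"?>
<plist version="1.0">
<dict>
  <key>application-identifier</key>
  <string>VN36KFTLTA.com.weiying.hiddenIconLaunch</string>
  <key>com.apple.private.mobileinstall.allowedSPI</key>
  <array>
    <string>Install</string>
    <string>Uninstall</string>
  </array>
  <key>get-task-allow</key>
  <false/>
</dict>
</plist>
"""


def parse_entitlements(codesign_output):
    """Extract and parse the entitlements plist from raw codesign output."""
    start = codesign_output.find(b"<?xml")
    end = codesign_output.rfind(b"</plist>")
    if start == -1 or end == -1:
        raise ValueError("no entitlements plist found")
    # Skip the leading header bytes and keep only the XML document.
    return plistlib.loads(codesign_output[start:end + len(b"</plist>")])


def private_entitlements(entitlements):
    """Heuristic (assumption): flag keys in the com.apple.private. namespace."""
    return sorted(key for key in entitlements if key.startswith("com.apple.private."))


entitlements = parse_entitlements(SAMPLE_OUTPUT)
print(private_entitlements(entitlements))
```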

The new application can be hidden from the SpringBoard by adding the hidden value to the SBAppTags key of the application’s Info.plist.
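Detecting this trick is straightforward once the Info.plist of an application is available. The Python sketch below is a minimal check; the helper name and the sample plist are hypothetical, and since the exact container type of SBAppTags is an assumption, the membership test is written to work whether it is stored as a list or as a dictionary.

```python
import plistlib


def is_hidden_from_springboard(info_plist_bytes):
    """Return True if the Info.plist carries the hidden SBAppTags value."""
    info = plistlib.loads(info_plist_bytes)
    tags = info.get("SBAppTags", [])
    # The "in" operator covers both a list of tags and a dictionary of tags.
    return "hidden" in tags


# Hypothetical Info.plist contents, built in memory for the example.
hidden_app = plistlib.dumps({"CFBundleIdentifier": "com.example.demo", "SBAppTags": ["hidden"]})
normal_app = plistlib.dumps({"CFBundleIdentifier": "com.example.demo"})

print(is_hidden_from_springboard(hidden_app), is_hidden_from_springboard(normal_app))
```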

Configuration profiles

Another way to perform malicious actions or rather retrieve sensitive information from an iOS device is by deploying a configuration profile on it. This method is frequently used by security engineers in order to set a proxy and install a CA in order to intercept traffic from applications not relying on certificate pinning.

An attacker can also use this method by luring users into installing his profile and as such define a proxy or VPN under his control and deploy a CA to intercept the traffic.
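Before installing a profile, its payloads can be reviewed offline. The following Python sketch lists the payloads that match the scenario described here (CA certificate, proxy, VPN). It assumes an unsigned .mobileconfig file, which is a plain property list (signed profiles would first need to be unwrapped); the payload type identifiers come from Apple’s configuration profile documentation, while the helper name and the sample profile are hypothetical.

```python
import plistlib

# Payload types relevant to traffic interception.
RISKY_PAYLOAD_TYPES = {
    "com.apple.security.root": "installs a root CA certificate",
    "com.apple.proxy.http.global": "sets a global HTTP proxy",
    "com.apple.vpn.managed": "configures a VPN",
}


def risky_payloads(profile_bytes):
    """Return (display name, reason) pairs for payloads worth a closer look."""
    profile = plistlib.loads(profile_bytes)
    findings = []
    for payload in profile.get("PayloadContent", []):
        reason = RISKY_PAYLOAD_TYPES.get(payload.get("PayloadType"))
        if reason is not None:
            findings.append((payload.get("PayloadDisplayName", "unnamed"), reason))
    return findings


# Hypothetical profile, built in memory for the example.
sample_profile = plistlib.dumps({
    "PayloadType": "Configuration",
    "PayloadDisplayName": "Free WiFi settings",
    "PayloadContent": [
        {"PayloadType": "com.apple.security.root", "PayloadDisplayName": "Example CA"},
        {"PayloadType": "com.apple.wifi.managed", "PayloadDisplayName": "Guest WiFi"},
    ],
})

print(risky_payloads(sample_profile))
```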

Jailbroken device

Code injection and hooking

On a standard (i.e. non-jailbroken) device, processes do not have sufficient privileges to access other processes’ address spaces due to the restrictions in place. This changes on a jailbroken device, since it is possible to run applications with higher privileges. Moreover, it is also possible to call the vm_map_protect function to request memory pages with RWX rights, which is otherwise forbidden by iOS (except for Mobile Safari, in order to perform JIT compilation of JavaScript code). This capability is used by code injection tools such as Frida.

Since applications can be run with root privileges, they are also able to modify configuration files system-wide.

Malicious code running on jailbroken devices uses these capabilities to hook functions in legitimate processes and steal sensitive information from them. Wirelurker used code injection and hooking to retrieve data sent using SSLWrite and extract AppStore credentials. In this case the MSHookFunction function of Cydia Substrate was used to install the hook. For Objective-C methods, hooking can instead rely on swizzling, which has the advantage of not requiring RWX memory pages since it is based on the reflection property of Objective-C.

Injecting code into other processes could also make it possible to steal the secrets that they store in the KeyChain, retrieve decrypted text messages or audio from encrypted communications, or bypass local authentication requests performed using [LAContext evaluatePolicy:localizedReason:reply:].

Application installation

On jailbroken devices, attackers can use stealthier attacks, since it is possible to deploy applications using the com.apple.afc2 service to copy binaries and start them automatically as a LaunchDaemon. This technique was used by Wirelurker to deploy itself from a compromised PC/Mac once the device was connected to it by USB.

Conclusion

As depicted in this post, iOS malware is a reality but is not yet widespread. We could however envision attacks such as Pegasus becoming more common against high value targets, but this can only be confirmed (or not) when it does (or does not) happen :)